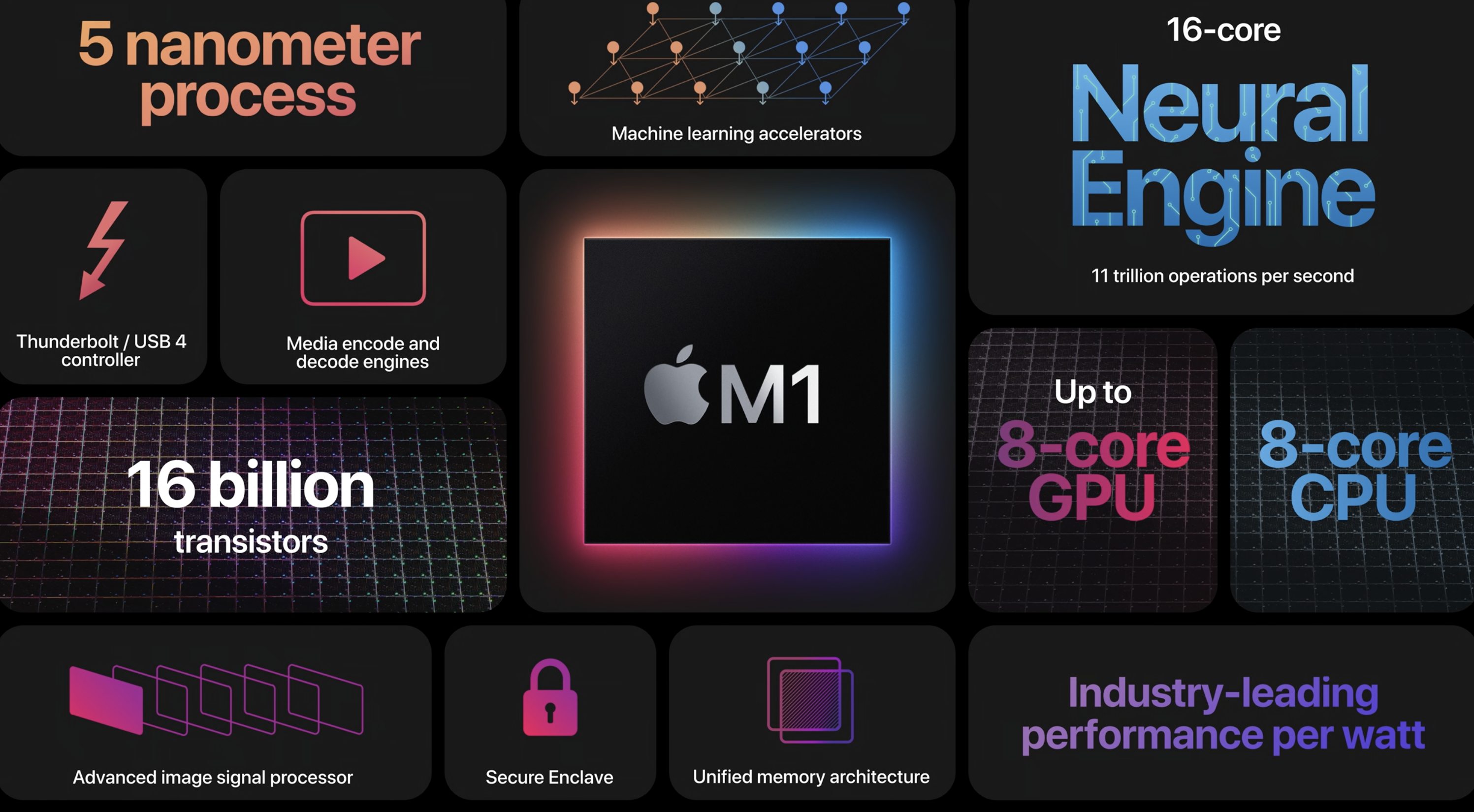

Join the Compositor BCI-modem beta-test program

The Compositor neurological chipset is a set of two programs that help with machine learning on Apple M1 and M2 platforms. Each of these patches supports device training so that the final result of the training meets your expectations. The RTC4k patch ensures reliable synchronization with Apple NTP servers, which…